In the last episode, I got the Sato SDK Yocto image running on my MinnowBoard, and explored some of the debug and trace tools therein. This week, I took a side trip to see if I could get the Yocto image running within Oracle’s VirtualBox.

I guess you could say that I’m easily distracted.

In Episode 33 of the MinnowBoard Chronicles, I celebrated getting the Sato SDK image running on my MinnowBoard. If you go back several episodes, you can see that this has been a long journey. Since this is a part-time gig, I haven't been able to spend as much time on it as I'd like, and I had to overcome several bumps in the road along the way. Not the least of these were RMA'ing the MinnowBoard itself in Episode 24, and RMA'ing my build machine's Ryzen 1700X because of early-silicon segmentation faults during Yocto builds, as documented in Episode 28.

By the way, the best way to read all of these earlier episodes is to download The MinnowBoard Chronicles eBook (note: the eBook requires registration, though reading the episodes individually doesn't).

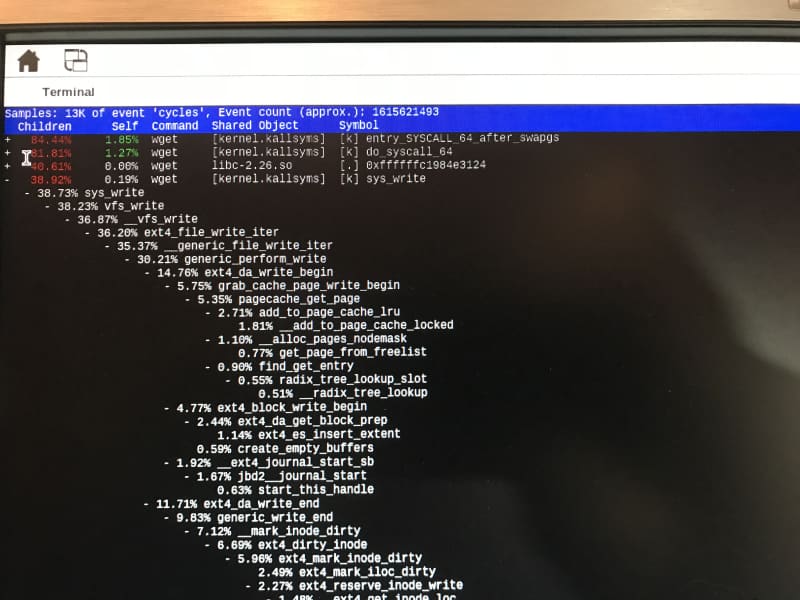

What a joy it was to get the build completed and be able to run the Sato SDK image on the MinnowBoard. I was then able to fire up some of the Linux kernel debug and trace tools, such as “perf”. It was fascinating to see the call tree generated by a simple command such as “perf record wget http://downloads.yoctoproject.org/mirror/sources/linux-2.6.19.2.tar.bz2” followed by “perf report”:

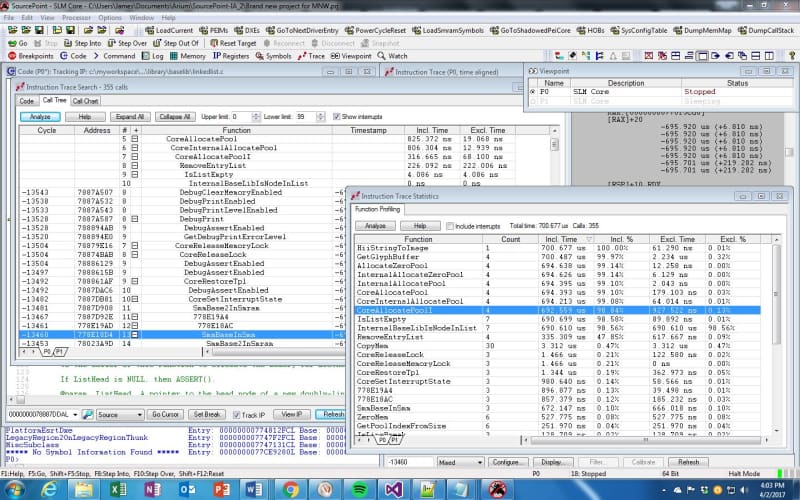

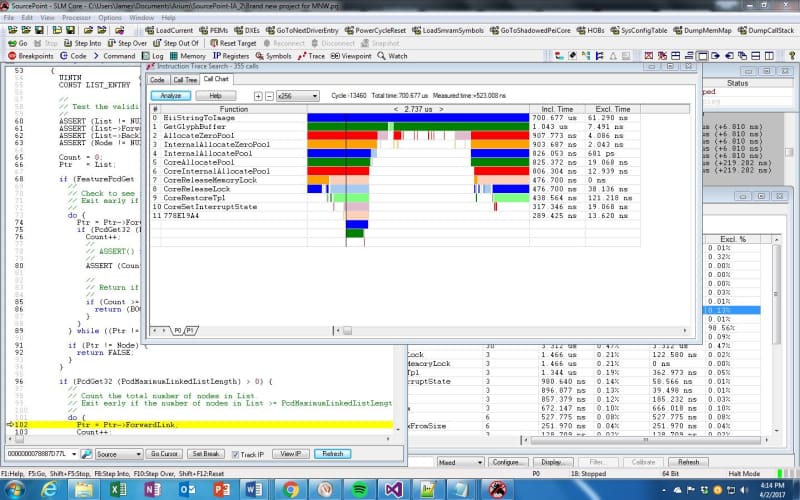

What I really wanted to do was compare the call tree capabilities of perf with those of our SourcePoint tool. SourcePoint generates call trees and call graphs with even more information, as shown below:

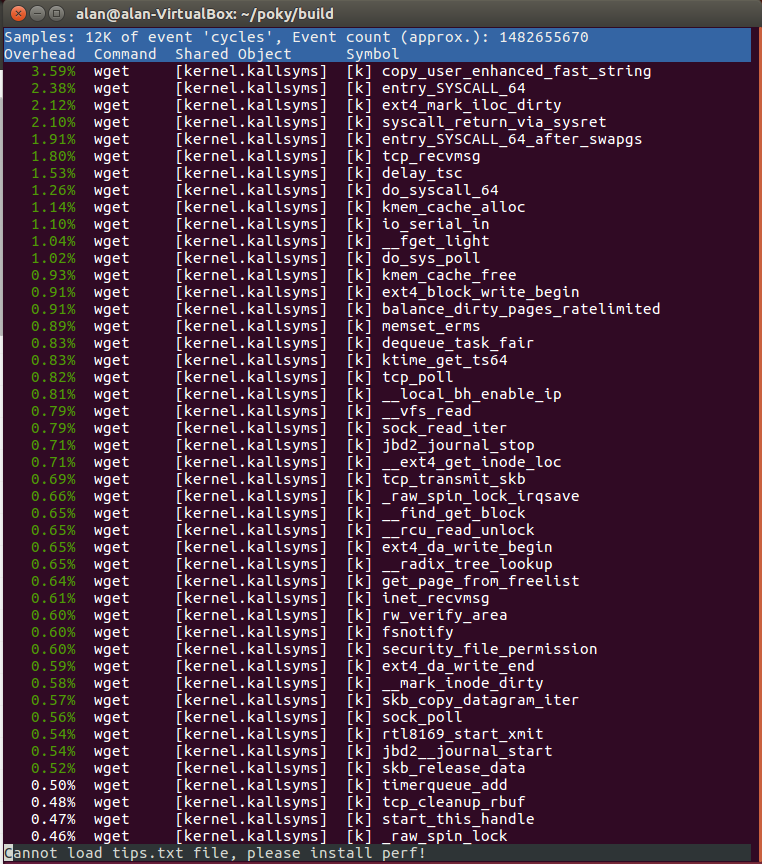

As I read about the debug and trace tools in the Yocto Mega-Manual, I noticed that many of them involve SSH'ing into the target from a remote host. I tried that, and it worked right away; I just followed the steps in "Beginner's Guide To Setting Up SSH On Linux And Testing Your Setup". I fired up my Ubuntu VM, made sure the OpenSSH server and client were installed, and successfully set up the connection. Note that the username on the MinnowBoard is "root", with no password. I ran "perf record" and "perf report" just as before, and from the Ubuntu VM running on Windows they behave the same. Better, actually, because I'm in a nice Linux + Windows environment with access to a lot of services, for example the Windows Snipping Tool:

This will be useful in the upcoming weeks as I dive into remote Linux kernel debug.
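For anyone who would rather script that remote workflow, here is a minimal Python sketch under stated assumptions, not the exact commands used above. The target address `root@192.168.1.50` is hypothetical, and the `-g` (call-graph recording), `-o`/`-i` (shared data file at `/tmp/perf.data`) and `--stdio` options are additions to the plain "perf record" / "perf report" used in the text. By default it only prints the ssh commands.

```python
import shlex
import subprocess

# Hypothetical address: replace with your MinnowBoard's IP or hostname.
# The Sato SDK image logs in as root with no password.
TARGET = "root@192.168.1.50"
WORKLOAD = ["wget", "http://downloads.yoctoproject.org/mirror/sources/linux-2.6.19.2.tar.bz2"]
DATA_FILE = "/tmp/perf.data"  # assumption: where perf stores its samples on the target


def remote(target, argv):
    """Wrap argv so that it runs on the target over ssh."""
    # ssh passes the remote command to the target's shell as a single string,
    # so quote each argument to keep it intact on the other side.
    return ["ssh", target, shlex.join(argv)]


def perf_commands(target, workload, data_file=DATA_FILE):
    """Build the remote 'perf record' and 'perf report' commands."""
    # -g records call graphs; -o and -i point record and report at the same file.
    record = ["perf", "record", "-g", "-o", data_file, "--", *workload]
    # --stdio prints the report as plain text, which reads well over ssh.
    report = ["perf", "report", "--stdio", "-i", data_file]
    return [remote(target, record), remote(target, report)]


def main(run=False):
    for cmd in perf_commands(TARGET, WORKLOAD):
        print(shlex.join(cmd))
        if run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    main()  # dry run: prints the commands; call main(run=True) to execute them
```

Keeping the data file on the target means the report is generated where the kernel symbols live, and only text comes back over the connection.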

So, back to being distracted: out of the blue, I got to wondering whether it was possible to boot the Sato SDK image in VirtualBox instead of running it on the MinnowBoard. The VirtualBox VM emulates an Intel platform, and when I did the Yocto build, it was targeted at the generic intel-corei7-64 machine. Would it just work? I decided to find out.

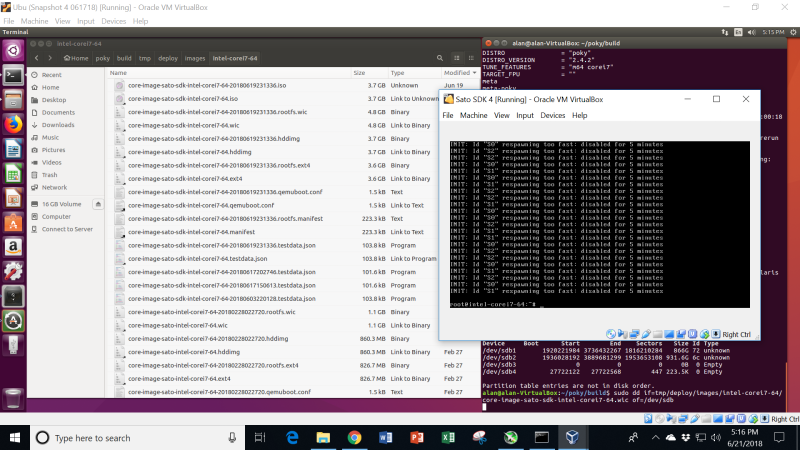

The catch with VirtualBox is that it wants a bootable image in a format such as an .iso file, rather than the .wic image my builds normally generated. So I had to figure out how to produce the image in the format I needed, which took a while and some digging in the Yocto Mega-Manual and on the web.

I’ll tell you the two tricks:

Firstly, you must create a .iso version of the image for VirtualBox to accept it. You do this by editing the local.conf file and adding these two lines:

```
IMAGE_FSTYPES += "live"
NOISO = "0"
```
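If you rebuild often, the edit can be scripted. This is a minimal Python sketch, assuming a hypothetical path of `poky/build/conf/local.conf` (adjust it to your own build directory). It appends the two settings only when they are missing, so running it twice leaves the file unchanged.

```python
from pathlib import Path

# The two settings from the text; NOISO = "0" keeps ISO generation switched on.
LIVE_ISO_SETTINGS = ['IMAGE_FSTYPES += "live"', 'NOISO = "0"']


def add_live_iso_settings(conf_text: str) -> str:
    """Return conf_text with any missing live/ISO settings appended at the end."""
    existing = {line.strip() for line in conf_text.splitlines()}
    missing = [s for s in LIVE_ISO_SETTINGS if s not in existing]
    if not missing:
        return conf_text  # already configured: leave the file as it is
    if conf_text and not conf_text.endswith("\n"):
        conf_text += "\n"
    # Appending at the end means a later NOISO = "0" follows any earlier plain
    # NOISO assignment in the file.
    return conf_text + "\n".join(missing) + "\n"


def patch_local_conf(path: Path) -> None:
    path.write_text(add_live_iso_settings(path.read_text()))


if __name__ == "__main__":
    conf = Path("poky/build/conf/local.conf")  # hypothetical location
    if conf.exists():
        patch_local_conf(conf)
```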

Secondly, because I've created very few virtual machines under VirtualBox up to now, I'd forgotten the best way to load the image from a USB stick. (Remember to simply copy the .iso file from poky/build/tmp/deploy/images/intel-corei7-64 onto the stick; don't use the "dd" command.) It is best to:

(1) Copy the .iso file onto your hard drive

(2) Create the new VM, using Type "Linux" and Version "Other Linux (64-bit)"

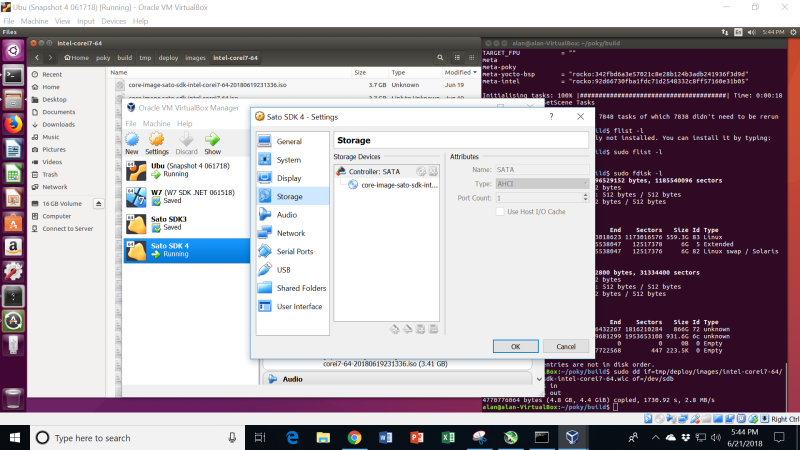

(3) Click on Settings > Storage

(4) Add a new SATA storage controller

(5) Add an optical drive to that controller
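The same five steps can also be driven from the command line with VBoxManage. Below is a minimal Python sketch, not the procedure used here: the VM name `sato-sdk`, the controller label `SATA`, the source and destination folders, and the 2048 MB memory setting are all hypothetical choices. `Linux_64` is the VirtualBox OS type ID behind "Other Linux (64-bit)". It prints the commands unless `run=True` is passed.

```python
import shlex
import shutil
import subprocess
from pathlib import Path

VM_NAME = "sato-sdk"   # hypothetical VM name
CONTROLLER = "SATA"    # hypothetical label for the new storage controller


def find_latest_iso(source_dir: Path) -> Path:
    """Return the most recently modified .iso in source_dir (e.g. the USB stick)."""
    isos = sorted(source_dir.glob("*.iso"), key=lambda p: p.stat().st_mtime)
    if not isos:
        raise FileNotFoundError(f"no .iso found in {source_dir}")
    return isos[-1]


def vbox_commands(vm: str, iso: Path, memory_mb: int = 2048) -> list[list[str]]:
    """VBoxManage equivalents of steps (2) to (5)."""
    return [
        # (2) Type "Linux", Version "Other Linux (64-bit)"
        ["VBoxManage", "createvm", "--name", vm, "--ostype", "Linux_64", "--register"],
        # Assumption: give the graphical Sato image a generous amount of RAM.
        ["VBoxManage", "modifyvm", vm, "--memory", str(memory_mb)],
        # (3)/(4) a new SATA storage controller
        ["VBoxManage", "storagectl", vm, "--name", CONTROLLER, "--add", "sata"],
        # (5) an optical drive on that controller, with the .iso inserted
        ["VBoxManage", "storageattach", vm, "--storagectl", CONTROLLER,
         "--port", "0", "--device", "0", "--type", "dvddrive", "--medium", str(iso)],
    ]


def prepare(source_dir: Path, dest_dir: Path, run: bool = False) -> list[list[str]]:
    # (1) copy the .iso onto the local hard drive instead of using it from the stick
    iso = Path(shutil.copy2(find_latest_iso(source_dir), dest_dir))
    cmds = vbox_commands(VM_NAME, iso)
    for cmd in cmds:
        print(shlex.join(cmd))
        if run:
            subprocess.run(cmd, check=True)
    return cmds


if __name__ == "__main__":
    stick = Path("/media/usb")  # hypothetical mount point of the USB stick
    if stick.is_dir():
        prepare(stick, Path.home(), run=False)
```

After the commands succeed, `VBoxManage startvm sato-sdk` (or the GUI's Start button) should boot the VM from the attached .iso.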

It looks like the below:

Phew! I finally got the new Sato SDK VM loaded.

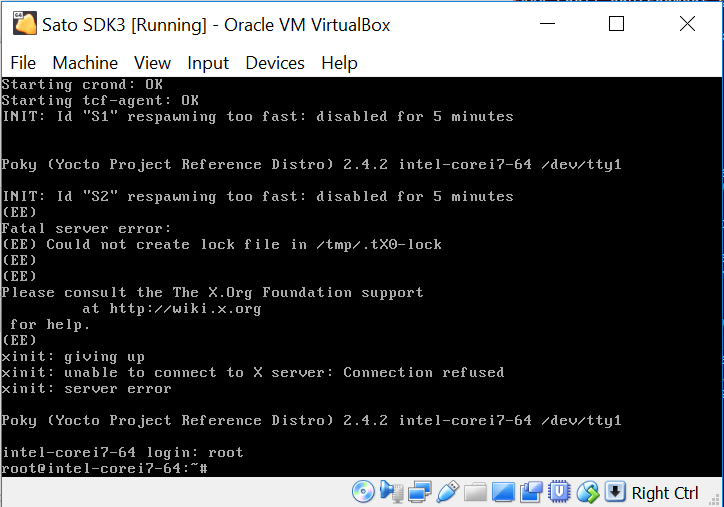

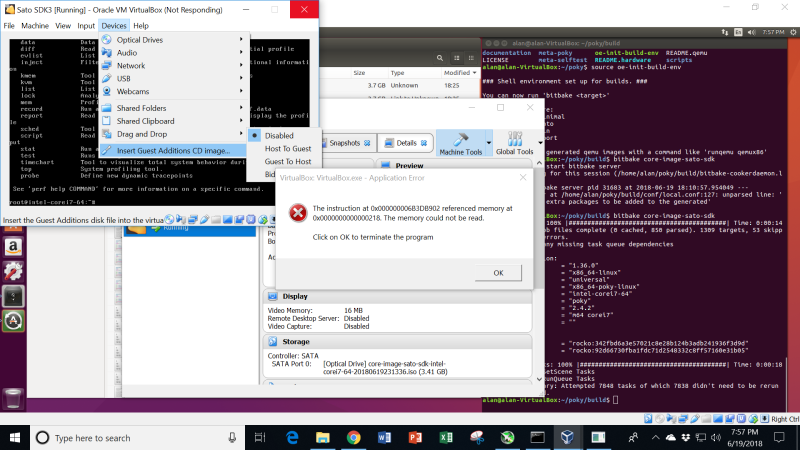

When I did manage to launch the virtual machine, it spat out a bunch of error messages:

Even once I had the Sato SDK running stably under VirtualBox, some incompatibilities showed up. In the screenshot below, the system complains that IDs S0, S1 and S2 are respawning too quickly:

I didn't dig into this too much. Apart from those error messages, and the fact that the screen doesn't resize itself when the VM window is resized, the build behaves reasonably, although I didn't test it thoroughly. I decided that it's better to run things on the MinnowBoard target and SSH into it as needed. Virtual machines, after all, are only virtual; there's no substitute for the real thing.