Testing 3D chips | JTAG, IJTAG and IEEE P1838 – Part 2 of a three-part series

In Part 1 of this three-part blog series, I introduced the developments that have brought the industry to the current state of affairs with regard to 3D chip test. I also introduced the PCOLA board test methodology and explained that it could be applied to 3D chips. In addition, I discussed the work of the IEEE P1838 working group, which is developing a standard for 3D chip test. So, where do we go from here? In this blog we’ll take a close look at the current state of the art in 3D chip test. Specifically, we’ll look at how a high bandwidth memory (HBM) stack is tested.

The 2.5D and 3D devices in production today are mostly memory chips like HBM. Since the standards for memory chips fall under the auspices of JEDEC (Joint Electron Device Engineering Council), any test features inherent in HBM are included in the standard that defines HBM, the JEDEC JESD235 standard. This standard incorporates a subset of the IEEE 1500 embedded core test standard as its test methodology for HBMs.

Because memory die stacks are mostly homogeneous – that is, the stack is made up of identical die from the same provider – they are easier to fabricate than heterogeneous stacks composed of different die from different providers. As a result, homogeneous memory stacks have been delivered to market sooner than heterogeneous stacks. Debugging and testing heterogeneous 3D die stacks falls under the IEEE P1838 standard, which is currently under development.

Some in the industry are concerned that test features could be incompatible if a memory stack with IEEE 1500 features is interfaced with 3D IEEE P1838 die stacks. Others are concerned about whether a homogeneous memory stack could support features that would allow it to be tested with the PCOLA/SOQ/FAM board test method.

3D Memory Test Features

In reality, 3D memory stacks are more testable than the single-die memory chips that are usually soldered onto circuit boards. HBMs, for example, typically include the IEEE 1500 architecture to enable scan testing around the boundary of the chip, covering all of the data and control signals. In addition, IEEE 1500 provides a centralized Instruction Register (IR) and Test Data Registers (TDRs) to support built-in self-test (BIST) and built-in self-repair (BISR) features. However, the IEEE 1500 architecture in HBMs only incorporates the TDR and IR signals from the IEEE 1149.1 JTAG standard without including JTAG’s Test Access Port (TAP) and its finite state machine (FSM). So, a memory die or a 3D memory stack that supports IEEE 1500 exposes the following 1500 interface signals: WRCK (wrapper clock), ResetN (wrapper reset), ShiftWR (shift wrapper), CaptureWR (capture wrapper), UpdateWR (update wrapper), WSI (wrapper scan in), WSO (wrapper scan out), and SelectWIR (select wrapper instruction register).
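To make the role of these signals concrete, here is a minimal behavioral sketch in Python of how a wrapper instruction register (WIR) might respond to them. It is a toy model, not the standard's definition: the class name `WrapperSerialPort`, the helper `load_instruction`, the 4-bit WIR width and the bit pattern used are all assumptions for illustration, CaptureWR is not modeled, and clock-edge timing is collapsed into a single call per WRCK cycle.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class WrapperSerialPort:
    """Toy model of a 1500-style wrapper serial port (not cycle-accurate)."""
    wir_length: int = 4  # assumed WIR width, for illustration only
    wir_shift: List[int] = field(default_factory=list, init=False)
    wir_update: List[int] = field(default_factory=list, init=False)

    def __post_init__(self) -> None:
        self.wir_shift = [0] * self.wir_length
        self.wir_update = [0] * self.wir_length

    def wrck(self, *, select_wir: int, shift_wr: int, update_wr: int, wsi: int) -> int:
        """Apply one WRCK cycle and return the bit seen on WSO."""
        wso = self.wir_shift[-1]
        if select_wir and shift_wr:
            # Shift one bit in from WSI; the oldest bit leaves on WSO.
            self.wir_shift = [wsi] + self.wir_shift[:-1]
        if select_wir and update_wr:
            # Copy the shift stage to the update stage: the instruction becomes active.
            self.wir_update = list(self.wir_shift)
        return wso

    def reset_n(self) -> None:
        """Model ResetN being asserted: clear the active instruction."""
        self.wir_update = [0] * self.wir_length


def load_instruction(port: WrapperSerialPort, bits: List[int]) -> List[int]:
    """Shift `bits` into the WIR through WSI, then pulse UpdateWR."""
    for bit in bits:
        port.wrck(select_wir=1, shift_wr=1, update_wr=0, wsi=bit)
    port.wrck(select_wir=1, shift_wr=0, update_wr=1, wsi=0)
    return port.wir_update
```

The point of the sketch is that every one of these control inputs has to be supplied from outside the memory die; nothing in the model generates ShiftWR, UpdateWR or SelectWIR by itself.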

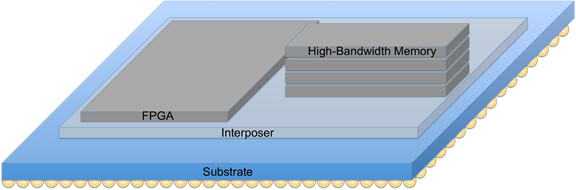

In the case of HBM, two memory arrays and two IEEE 1500 wrappers are deployed on each memory die. Four die make up an HBM stack, for a total of eight memory arrays and eight 1500 wrappers. Having multiple memory die in a stack would make HBM a true 3D stack. However, the die stack is then placed on an interposer alongside another chip, a memory management unit (MMU), and because the MMU and the interposer are not considered part of the stack of memory die, an HBM device is considered a 2.5D architecture. The interposer provides much faster and many more interconnects between the MMU and the memory stack than would normally be possible by simply soldering packaged memory chips on a board. An HBM made up of an interposer, MMU and a stack of memory die is considered one packaged chip. (Figure 1)

Figure 1: Example of an HBM in a 2.5D architecture with an MMU implemented in an FPGA

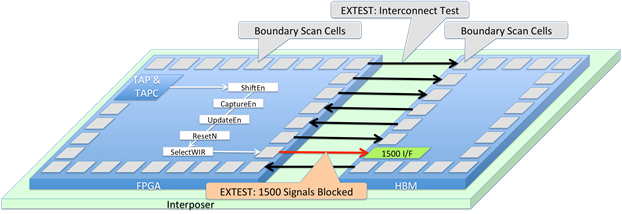

As it turns out, this type of architecture has problems. Without getting into too much detail, suffice it to say that the MMU and HBM could not accomplish a simple EXTEST interconnect test between the MMU’s IEEE 1149.1 boundary scan and the JEDEC-mandated 1500 wrappers around the HBM memories. The problem occurs because no accommodation was made to deliver the IEEE 1500 control signals needed to operate the HBM’s 1500 interface. Since the HBM has no JTAG TAP or TAP controller, and therefore no TAP FSM, it cannot operate the 1500 interface on its own. This means that the MMU needs to source the necessary 1500 operation signals. The problem appears when the EXTEST instruction is loaded into both the MMU’s IEEE 1149.1 JTAG IR and the HBM’s IEEE 1500 WIR, and is then asserted (updated). At this point, the MMU freezes the IEEE 1500 control signals because they pass through the boundary scan register of the MMU. (See Figure 2.) EXTEST forces all outputs to take on the value held in the update cell of a boundary scan register.

This problem arose because the FPGA die in the HBM device incorporated a generic IEEE 1149.1 boundary scan implementation. It did not support sequestering a set of signals that would be exempt from the effects of the boundary scan register (BSR), which is what is needed to drive the 1500 signals. Standards like IEEE 1149.1 JTAG do not accommodate the exporting of an IEEE 1500 interface.
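The freezing effect can be illustrated with a small Python sketch of a single boundary scan output cell sitting between the MMU's core logic and a pin that carries a 1500 control signal such as ShiftWR. This is a simplified model under stated assumptions: the names `BoundaryScanOutputCell` and `drive_shiftwr` are hypothetical, instructions are represented as plain strings, and only the pin-driving behavior is modeled.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class BoundaryScanOutputCell:
    """Simplified output cell: only the choice of what drives the pin is modeled."""
    update_latch: int = 0  # value last loaded into the cell's update stage

    def pin_value(self, core_value: int, instruction: str) -> int:
        # Under EXTEST (and CLAMP) the pin is driven from the update latch,
        # so whatever the core logic tries to drive is ignored.
        if instruction in ("EXTEST", "CLAMP"):
            return self.update_latch
        # For non-invasive instructions the core value passes straight through.
        return core_value


def drive_shiftwr(cell: BoundaryScanOutputCell, instruction: str,
                  core_sequence: List[int]) -> List[int]:
    """Return what the HBM would see on ShiftWR for each value the core drives."""
    return [cell.pin_value(value, instruction) for value in core_sequence]
```

With a non-invasive instruction such as BYPASS loaded, the sequence the core drives reaches the pin unchanged. With EXTEST loaded, every value collapses to the contents of the update latch, which is why the 1500 control signals stop toggling exactly when the interconnect test needs them.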

There is also a secondary problem with this configuration. Although the JTAG TAP controller in the FPGA will generate signals for the operation of the JTAG registers within the FPGA, it will not generate signals targeted outside of the FPGA. In fact, it may not be able to generate signals for the HBM’s IEEE 1500 interface at all. Some TAP controllers generate signals that are matched to the JTAG Test Data Register (TDR) cells within the chip. These are ‘gated-TCK signals,’ in which a ShiftEnable is gated with TCK to make a ShiftClock type of signal. The signals distributed across the chip are then ShiftClock, CaptureClock, and UpdateClock rather than TCK itself. Other TAP controllers deliver ‘synchronous data-enable’ signals, where TCK is distributed as a clock along with the ShiftEnable, CaptureEnable, and UpdateEnable signals. To operate an IEEE 1500 interface at the edge of a chip or die, as in this case, synchronous data-enable signals are required.
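The difference between the two signal styles can be sketched in Python by treating each signal as a list of sampled 0/1 values. This is only an illustration: the function names and the sample waveforms are made up for this example, and real designs must also deal with glitches and skew, which are ignored here.

```python
from typing import List


def gated_tck(tck: List[int], shift_enable: List[int]) -> List[int]:
    """Gated-TCK style: ShiftClock is TCK ANDed with ShiftEnable."""
    return [t & e for t, e in zip(tck, shift_enable)]


def rising_edges(signal: List[int]) -> int:
    """Count 0 -> 1 transitions in a sampled waveform."""
    return sum(1 for prev, cur in zip(signal, signal[1:]) if prev == 0 and cur == 1)


def enabled_rising_edges(tck: List[int], enable: List[int]) -> int:
    """Synchronous data-enable style: count rising TCK edges where enable is high."""
    return sum(
        1
        for i in range(1, len(tck))
        if tck[i - 1] == 0 and tck[i] == 1 and enable[i] == 1
    )


# Sample waveforms (assumed): four TCK cycles, ShiftEnable high for the middle two.
TCK = [0, 1, 0, 1, 0, 1, 0, 1]
SHIFT_ENABLE = [0, 0, 1, 1, 1, 1, 0, 0]
SHIFT_CLOCK = gated_tck(TCK, SHIFT_ENABLE)
```

Both styles describe the same two shift events in this example. What differs is what gets distributed: a gated-TCK controller sends out only `SHIFT_CLOCK`, while a synchronous data-enable controller sends out `TCK` and `SHIFT_ENABLE` separately. A wrapper that expects a free-running WRCK plus a ShiftWR enable maps naturally onto the second style.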

Neither IEEE 1500 nor the IEEE 1149.1 JTAG standard defines how to generate the ShiftWR, CaptureWR, UpdateWR, ResetN, and SelectWIR signals and distribute them off the chip where they originate while bypassing that chip’s boundary scan cells. In this case, the signals would be distributed off-chip onto the interposer in the 2.5D device. No standard defines how many loads these signals are expected to drive or their expected performance on a board or interposer. The only specification available would be the signal input or drive requirements contained in the IEEE 1500 standard.

Figure 2: Example of a 1500 interface being blocked during an EXTEST interconnect test

As a result of this situation, operating the HBM’s IEEE 1500 interface would require signals generated in one chip that could drive the HBM die’s input. This may require another TAP controller in addition to the device’s primary TAP controller. This secondary TAP controller would be implemented in the MMU to drive signals for the HBM 1500 interface. With these signals the HBM can be placed in any of several test modes, memory BISTs can be operated and memory self-repair can even be activated as long as the MMU providing the 1500 control signals is not in EXTEST, CLAMP or possibly HIGHZ. Note that for the problem shown in Figure 2, the solution was to generate a second TAP and TAP controller in the FPGA/MMU and to create a shadow boundary scan register that does not wrap the 1500 control signals with boundary scan cells. This shadow boundary scan register consumes a large amount of the FPGA’s register resources.

Regardless of whether the MMU is an FPGA, ASIC or SoC, or whether the stacked device is 2D, 2.5D or 3D, these issues must still be addressed. One approach in the design of an ASIC or SoC might be to include a non-registered mode for a section of the boundary scan ring. This would effectively leave the IEEE 1500 control signals unwrapped during EXTEST, HIGHZ, and CLAMP. Still, the signals must be the correct type of signals and they must be designed to exit the die/chip. As mentioned, this sort of master chip interface for 1500 is not defined in any standard. If it were, it would address the following:

- Where are the Shift, Capture, Update, Reset, SelectWIR, TCK, TDI, TDO signals sourced? Are they generated by the primary TAP controller, a secondary TAP controller or a custom IEEE 1500 TAP controller? Does a secondary TAP use the same control signals as the primary TAP?

- What type of signals are generated? Are they synchronous data-enable signals or some other type?

- How many drive loads are on the master chip IEEE 1500 interface and what are the electrical and timing characteristics of this interface?

A related effort began well over a year ago to define the IEEE 1687 IJTAG interface produced by a chip’s IEEE 1149.1 JTAG TAP controller. This effort stalled, but it could be revisited and extended under a new IEEE Project Authorization Request (PAR) to also address the need for an IEEE 1500 master controller interface.

In a sense, this example shows how problems can develop with a heterogeneous 3D die stack. Although the memory die in this example are homogeneous, the MMU and interposer could be seen as heterogeneous members of a 3D stack. In this case, the homogeneous memory die support a JEDEC-mandated IEEE 1500 test architecture while the heterogeneous members of the device do not. Without specifically defining a 1500 master controller interface in the IEEE P1838 3D chip test standard or some other standard such as IEEE P1149.1.1, placing HBM memories in a 3D stack will result in a loss of test coverage in the 3D stack.

This brings us to the question I posed in the first blog in this series. Can the PCOLA/SOQ/FAM board test methodology be applied to 2.5D or 3D die stacks as if they were 2D circuit boards? The example I discussed in this blog focused on incorporating HBM memory in a stacked device. If the PCOLA/SOQ/FAM test methodology could be applied to such a stack, several questions come up: Is there a way for a stack assembler to assess whether all of the die are present (the P in PCOLA)? Whether the die in the stack are the correct (C) die? That they are oriented (O) correctly? That each die is live (L) with power? That there are no shorts or opens (PCOLA/SOQ) on the die interconnects?

Given the IEEE 1500 architecture and resources within the HBM, and assuming that the interconnect test issues described earlier have been resolved, the WID (the ID code as defined by 1500) can be used to verify that each die is present in the stack and that it is the correct die. EXTEST can be used to find shorts and opens. Operating the HBM BIST can help determine whether the memories are receiving power.
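As a rough illustration of the presence and correctness checks, the Python sketch below compares the WID values read back from each die against the values the assembler expects. Everything specific here is an assumption: the function name `check_stack`, the die names, and the ID values are hypothetical, the mechanism for actually reading a WID through the 1500 interface is not shown, and a die that does not respond is simply represented as `None`.

```python
from typing import Dict, Optional


def check_stack(expected: Dict[str, int],
                observed: Dict[str, Optional[int]]) -> Dict[str, Dict[str, bool]]:
    """Report the 'P' (presence) and 'C' (correctness) status for each expected die."""
    report: Dict[str, Dict[str, bool]] = {}
    for die, expected_wid in expected.items():
        wid = observed.get(die)  # None models a die that returned no ID
        present = wid is not None
        report[die] = {
            "present": present,
            "correct": present and wid == expected_wid,
        }
    return report


# Hypothetical ID values, for illustration only.
EXPECTED = {"die0": 0x1A2B, "die1": 0x1A2B, "die2": 0x1A2B, "die3": 0x1A2B}
OBSERVED = {"die0": 0x1A2B, "die1": 0x1A2B, "die2": 0x0FFF, "die3": None}
REPORT = check_stack(EXPECTED, OBSERVED)
```

In this made-up example, `die2` is present but reports the wrong ID, and `die3` is flagged as missing. Shorts, opens and the live-power check would come from EXTEST and BIST results rather than from the ID comparison.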

The ‘A’ in PCOLA/SOQ/FAM refers to alignment and the ‘Q’ to solder joint quality. Both of these metrics can only be determined on circuit boards through visual inspection, requiring X-ray or another method. This will likely also be the case with a 3D stack. An on-chip BIST could provide the ‘F’ or functional test and also verify the ‘A’ or at-speed performance which would in turn produce an ‘M’ or measurement on the device.

Of course, the real bottom-line question is: Is meeting the requirements of PCOLA/SOQ/FAM enough? I’ll explore this in the final blog in this series in a couple of days.

NOTE: Al Crouch is a member of ASSET’s consultation team.