In a previous blog, we touched on some of the key attributes of Intel QuickPath Interconnect versus PCI Express Gen3, to explain why the acceptable bit error rate threshold of Intel QPI is two orders of magnitude lower. This article elaborates on how some of the key design features of QPI contribute to this more stringent requirement.

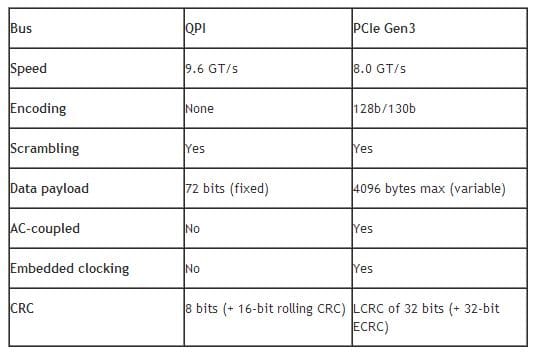

The bit error rate (BER) threshold of QPI, the point beyond which the protocol is not guaranteed to run reliably, is 1 error in 10^14 bits (a BER of 10^-14). The BER threshold of PCI Express Gen3 is 1 error in 10^12 bits (a BER of 10^-12). Some of the background behind this is in the following table, which is excerpted from my blog at Bit Error Rate of Intel QuickPath Interconnect – Part 1:

Designing any protocol involves many trade-off decisions between speed, throughput, power consumption, silicon footprint, error recovery, and many other factors. So, there may be more than one “right” design decision, depending on the applications a given interface is expected to support.
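
To put the two thresholds in perspective, the short sketch below converts a BER into an average time between bit errors on a single lane. The 8 Gbit/s lane rate is an assumption: it is the PCIe Gen3 per-lane rate, and it is applied to QPI as well purely so the two thresholds can be compared like for like.

```python
def mean_seconds_between_errors(ber: float, bits_per_second: float) -> float:
    """Average time between bit errors on one lane running exactly at `ber`."""
    return 1.0 / (ber * bits_per_second)


QPI_BER = 1e-14        # 1 error in 10^14 bits (from the article)
PCIE_GEN3_BER = 1e-12  # 1 error in 10^12 bits (from the article)
LANE_RATE = 8e9        # assumed: 8 Gbit/s per lane for both links

for name, ber in (("PCIe Gen3", PCIE_GEN3_BER), ("QPI", QPI_BER)):
    seconds = mean_seconds_between_errors(ber, LANE_RATE)
    print(f"{name}: one error roughly every {seconds:,.0f} s "
          f"({seconds / 3600:.2f} h) per lane")
```

Under these assumptions, a lane sitting right at the PCIe Gen3 threshold sees an error about every two minutes, while a lane at the QPI threshold sees one about every three and a half hours.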

Let’s examine some of the key differences between QPI and PCIe and see how they relate to and affect the required BER thresholds.

Trace Length

QPI is a point-to-point chip-to-chip interconnect between Intel processors. The trace length between devices is intended to be in the range of twelve inches or so. PCIe Gen3, on the other hand, can support a long channel length of ~20 inches. The shorter range of QPI allowed for an expectation that the transmission medium would be “cleaner”, and therefore support a lower bit error rate. This permitted other design decisions to be made to enhance QPI’s throughput and deterministic behavior.

Encoding

Encoding is a mechanism whereby a data bit stream is encapsulated into characters or symbols. For example, in 8b/10b encoding, for eight bits of data, the lower five bits of data are encoded into a 6-bit group (the 5b/6b portion), and the top three bits are encoded into a 4-bit group (the 3b/4b portion). The purpose of encoding is to achieve DC balance and bounded disparity (the difference between the number of 1s and 0s transmitted), while still providing a sufficient number of state changes to allow reasonable clock recovery.
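
As a small illustration of disparity and run length, the sketch below measures both for a few 10-bit code groups. The bit patterns used for K28.5 and D21.5 are the commonly published 8b/10b encodings; the helper names are made up for this example.

```python
def disparity(code: str) -> int:
    """Number of 1s minus number of 0s in a non-empty bit string."""
    return code.count("1") - code.count("0")


def longest_run(code: str) -> int:
    """Length of the longest run of identical bits in a non-empty bit string."""
    best = run = 1
    for prev, cur in zip(code, code[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


# Commonly published 8b/10b code groups (bit order abcdei fghj).
K28_5_RD_NEG = "0011111010"  # sent when the running disparity is negative
K28_5_RD_POS = "1100000101"  # sent when the running disparity is positive
D21_5 = "1010101010"         # a perfectly balanced data character

for name, code in (("K28.5 (RD-)", K28_5_RD_NEG),
                   ("K28.5 (RD+)", K28_5_RD_POS),
                   ("D21.5", D21_5)):
    print(f"{name}: disparity {disparity(code):+d}, longest run {longest_run(code)}")
```

The two K28.5 variants carry disparities of +2 and -2, so alternating between them keeps the line DC balanced, and neither contains a run longer than five identical bits.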

PCIe Gen1 and Gen2 use 8b/10b encoding, which carries a 20% overhead penalty in data throughput relative to bit rate. PCIe Gen3 moved to a 128b/130b encoding scheme, which allowed throughput to roughly double while holding the increase in line rate to 60%. PCIe Gen3 sustains an acceptable level of clock recovery through "scrambling", which combines the data stream with a pseudo-random sequence generated from a known binary polynomial in a feedback topology. Because the scrambling polynomial is known, the receiver can recover the data by running the received stream through a matching descrambler built on that polynomial. Scrambling reduces the instances of long runs of consecutive identical digits (CIDs), thereby enhancing the clock recovery circuitry’s ability to lock and hold.
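
The overhead figures can be checked with a few lines of arithmetic. The sketch assumes the per-lane line rates of 5 GT/s for Gen2 and 8 GT/s for Gen3, and it ignores all protocol overhead above the encoding layer.

```python
def payload_rate(line_rate_gt: float, data_bits: int, coded_bits: int) -> float:
    """Per-lane payload rate in Gbit/s after encoding overhead."""
    return line_rate_gt * data_bits / coded_bits


GEN2_LINE_RATE = 5.0  # GT/s per lane, 8b/10b
GEN3_LINE_RATE = 8.0  # GT/s per lane, 128b/130b

gen2 = payload_rate(GEN2_LINE_RATE, 8, 10)
gen3 = payload_rate(GEN3_LINE_RATE, 128, 130)

print(f"8b/10b efficiency:    {8 / 10:.1%}")
print(f"128b/130b efficiency: {128 / 130:.2%}")
print(f"Gen2 payload: {gen2:.2f} Gbit/s, Gen3 payload: {gen3:.2f} Gbit/s")
print(f"Throughput gain: {gen3 / gen2:.2f}x for a "
      f"{GEN3_LINE_RATE / GEN2_LINE_RATE - 1:.0%} increase in line rate")
```

The result is a throughput gain of just under 2x, which is the "doubling" referred to above.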

It is worthwhile to note that QPI uses scrambling as well.
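
A minimal sketch of the scrambling idea follows. It is an additive scrambler built on a 16-bit linear feedback shift register whose recurrence corresponds to x^16 + x^5 + x^4 + x^3 + 1; the polynomial, seed, and bit ordering are illustrative assumptions and are not meant to be bit-exact with either the PCIe or QPI specifications.

```python
def lfsr_bits(seed: int, taps=(0, 3, 4, 5), width: int = 16):
    """Yield an endless pseudo-random bit stream from a Fibonacci LFSR.

    `taps` are the state bit positions XORed together to form the new
    top bit. The default taps are an illustrative choice only.
    """
    if seed == 0 or seed >> width:
        raise ValueError("seed must be a non-zero value that fits in the register")
    state = seed
    while True:
        yield state & 1
        feedback = 0
        for tap in taps:
            feedback ^= (state >> tap) & 1
        state = (state >> 1) | (feedback << (width - 1))


def scramble(bits, seed: int = 0xFFFF):
    """XOR the data with the LFSR stream. Applying it twice restores the data."""
    return [bit ^ key for bit, key in zip(bits, lfsr_bits(seed))]


def longest_run(bits) -> int:
    """Length of the longest run of identical bits in a non-empty sequence."""
    best = run = 1
    for prev, cur in zip(bits, bits[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


data = [0] * 256                   # worst case for clock recovery: no transitions
on_the_wire = scramble(data)
recovered = scramble(on_the_wire)  # receiver runs the same LFSR from the same seed

print("longest run before scrambling:", longest_run(data))
print("longest run after scrambling: ", longest_run(on_the_wire))
print("data recovered intact:        ", recovered == data)
```

In this additive form the receiver simply regenerates the same sequence and XORs it out again, which is why both ends must agree on the polynomial and the starting state.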

Embedded Clocking

QPI does not use clocking embedded in the data stream, but rather has a “forwarded clock” which requires an additional differential pair on the link. This eliminates the need for encoding, which in turn increases its throughput. The receiver creates a set of derived strobes, one for each lane, which along with link training information is used to place the strobe point in the middle of the received data window. This enables much higher data rates versus embedded strobes which themselves undergo signal degradation across the physical channel.
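
The idea of placing the strobe in the middle of the received data window can be sketched as follows. This is a hypothetical illustration, not the QPI training algorithm: it assumes the receiver has swept the strobe across a set of phase steps and recorded, for each step, whether a training pattern was received without error.

```python
def center_of_widest_window(passes: list[bool]) -> int:
    """Return the phase step at the middle of the widest run of passing steps.

    `passes[i]` is True if the (hypothetical) training pattern was received
    correctly with the strobe at phase step i.
    """
    best_start, best_len = 0, 0
    start = None
    # A trailing False closes a window that runs to the end of the sweep.
    for i, ok in enumerate(passes + [False]):
        if ok and start is None:
            start = i
        elif not ok and start is not None:
            if i - start > best_len:
                best_start, best_len = start, i - start
            start = None
    if best_len == 0:
        raise ValueError("no passing phase step found")
    return best_start + (best_len - 1) // 2


# Example sweep for one lane: the data eye is open from step 2 through step 6.
sweep = [False, False, True, True, True, True, True, False, True, False]
print("strobe placed at phase step", center_of_widest_window(sweep))
```

Choosing the midpoint of the widest passing window leaves the most margin on either side for the strobe to drift before errors begin.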

The trade-off, of course, is that the skew requirements of the data lanes relative to each other, and to the strobe sources, have to be taken into consideration. This in turn can require the BER threshold of QPI to be lower.

It should be noted that QPI will periodically retrain, to re-establish strobe synchronization. Strobe drift occurs naturally due to long-term changes in the system, from temperature and voltage-related effects, among others.

CRC

A CRC uses polynomial arithmetic to create a checksum over the data it is intended to protect. The design of the CRC polynomial depends on the maximum total length of the block to be protected (data + CRC bits), the desired error protection features, the type of resources available for implementing the CRC, and the desired performance. Trade-offs between these are quite common. For example, a typical PCIe Gen3 packet CRC polynomial is:

$$x^{32} + x^{26} + x^{23} + x^{22} + x^{16} + x^{12} + x^{11} + x^{10} + x^{8} + x^{7} + x^{5} + x^{4} + x^{2} + x + 1$$

The PCIe Gen3 CRC-32 for the TLP LCRC will detect 1-bit, 2-bit, and 3-bit errors. 4-bit errors may escape detection. Bit slips or adds have no guarantee of detection. “Burst” errors of 32 bits or less will be detected.
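
The detection claims can be explored with a plain bitwise CRC. The sketch below uses the widely used CRC-32 generator 0x04C11DB7 with a zero initial value, no bit reflection, and no final inversion; those simplifications are assumptions, so the values it produces are not bit-exact with the PCIe LCRC. It flips every combination of one, two, and three bits in a short message and counts how many corrupted messages still produce the original CRC.

```python
from itertools import combinations

CRC32_POLY = 0x04C11DB7  # generator with the implicit x^32 term dropped


def crc32_msb_first(data: bytes, poly: int = CRC32_POLY) -> int:
    """Bitwise CRC-32, most significant bit first, zero initial value."""
    crc = 0
    for byte in data:
        crc ^= byte << 24
        for _ in range(8):
            if crc & 0x80000000:
                crc = ((crc << 1) ^ poly) & 0xFFFFFFFF
            else:
                crc = (crc << 1) & 0xFFFFFFFF
    return crc


def flip_bits(data: bytes, positions) -> bytes:
    """Return a copy of `data` with the given bit positions inverted."""
    corrupted = bytearray(data)
    for pos in positions:
        corrupted[pos // 8] ^= 0x80 >> (pos % 8)
    return bytes(corrupted)


def count_undetected(message: bytes, flips: int) -> int:
    """Count `flips`-bit error patterns that leave the CRC unchanged."""
    good = crc32_msb_first(message)
    return sum(
        crc32_msb_first(flip_bits(message, pos)) == good
        for pos in combinations(range(len(message) * 8), flips)
    )


message = bytes.fromhex("0123456789abcdef")  # arbitrary 64-bit example payload
for flips in (1, 2, 3):
    print(f"{flips}-bit errors undetected:", count_undetected(message, flips))
```

For a message this short, every one-, two-, and three-bit error changes the CRC, which matches the detection guarantees listed above.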

For QPI, the 8-bit CRC can detect the following within flits:

- All 1b, 2b, and 3b errors

- Any odd number of bit errors

- All bit errors of burst length 8 or less, where burst length refers to the number of contiguous bits in error in the payload being checked, counted from the first errored bit to the last (e.g. ‘1xxxxxx1’)

- 99% of all errors with burst length 9

- 99.6% of all errors of burst length > 9
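
The burst figures in this list follow from general properties of an 8-bit CRC and can be reproduced by brute force. The sketch below assumes the generator x^8 + x^7 + x^2 + 1 (0x185) and an 80-bit flit; the article does not state QPI's polynomial or flit size, so treat both as assumptions for illustration. An error pattern escapes detection only if it is an exact multiple of the generator.

```python
GENERATOR = 0x185  # x^8 + x^7 + x^2 + 1, assumed for illustration


def poly_mod(value: int, gen: int = GENERATOR) -> int:
    """Remainder of `value` divided by `gen`, both as polynomials over GF(2)."""
    gen_len = gen.bit_length()
    while value.bit_length() >= gen_len:
        value ^= gen << (value.bit_length() - gen_len)
    return value


def detected_fraction(burst_len: int, gen: int = GENERATOR) -> float:
    """Fraction of error bursts of exactly `burst_len` bits that are detected.

    A burst has its first and last bits in error; the bits in between are free.
    The burst's position in the flit does not affect detectability.
    """
    if burst_len == 1:
        patterns = [1]
    else:
        patterns = [
            (1 << (burst_len - 1)) | (middle << 1) | 1
            for middle in range(1 << (burst_len - 2))
        ]
    detected = sum(1 for pattern in patterns if poly_mod(pattern, gen) != 0)
    return detected / len(patterns)


for length in (8, 9, 10, 12):
    print(f"burst length {length}: {detected_fraction(length):.2%} detected")

# Two-bit errors any distance apart within an assumed 80-bit flit.
FLIT_BITS = 80
two_bit_ok = all(poly_mod((1 << gap) | 1) != 0 for gap in range(1, FLIT_BITS))
print("all 2-bit errors within a flit detected:", two_bit_ok)
```

With this generator, exactly one of the 128 possible 9-bit bursts goes undetected (about 99.2% detected), and one in 256 of the longer bursts does (about 99.6% detected), in line with the percentages quoted in the list.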

QPI’s 8-bit CRC is smaller, but it has a smaller, fixed-size payload to protect. PCIe Gen3 has a larger, variable-sized payload, and thus its CRC needs to be 32 bits to provide a similar level of error detection. It would appear that the error-detection capabilities of PCIe Gen3 are somewhat greater than those of QPI. But having a lower BER threshold relieves QPI of the need for a more stringent CRC.

In summary, many of the fundamental characteristics of QPI’s design, including trace length, lack of encoding, forwarded clocking and its CRC, dictate that it should have a BER threshold two orders of magnitude lower than that of PCIe Gen3.

One Response

Thanks Alan!

It’s been noted elsewhere that BER as used here should be written out as Bit Error Ratio (the unitless ratio of bits in error over bits in total) rather than as Bit Error Rate (the number of bits in error over time, expressed in bits per second, bps) …