PCI-SIG specifies a bit error rate (BER) threshold of 1E-12 or better for PCI Express 3.0. What might cause this threshold to be exceeded, and what happens if it is?

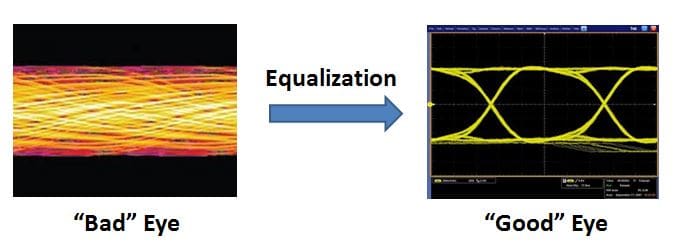

PCIe Gen3, like many high-speed serial interfaces, is intended to deliver robust performance even in the presence of defects and other adverse conditions on a link. In particular, at the physical layer, equalization schemes are used to compensate for interference due to the effects of board traces, connectors, stubs, and other factors. At the 8GT/s speeds of PCIe 3.0, physical measurements at the receiver typically show a completely closed eye diagram. Equalization is used to open the eye and improve the performance of the link.

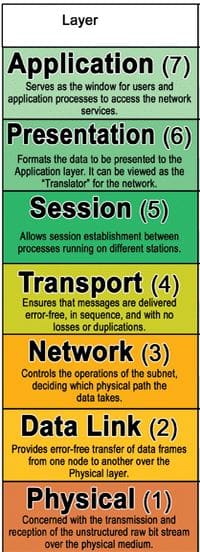
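To put the 1E-12 threshold in perspective, the arithmetic below estimates how often a single lane would see a bit error at 8GT/s. It is only a back-of-the-envelope sketch: the function name is made up for illustration, and it assumes errors are independent and evenly spread, which real links do not guarantee.

```python
def mean_seconds_between_errors(ber: float, bits_per_second: float) -> float:
    """Average time between bit errors on one lane, assuming independent errors."""
    errors_per_second = ber * bits_per_second
    return 1.0 / errors_per_second


# 8 GT/s means 8e9 raw bits per second on each lane.
GEN3_RAW_BITS_PER_SECOND = 8e9

for ber in (1e-12, 1e-9, 1e-6):
    seconds = mean_seconds_between_errors(ber, GEN3_RAW_BITS_PER_SECOND)
    print(f"BER {ber:.0e}: one error every {seconds:g} s per lane on average")
```

At exactly 1E-12, that works out to roughly one bit error every 125 seconds per lane, and a wider link sees proportionally more. A link running a few orders of magnitude worse than the threshold is correcting errors almost constantly.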

To understand the importance of equalization, let’s first look at why it’s needed. There is no error checking at the physical layer for PCIe; the specification assumes a “perfect” transmission medium and leaves error checking to the upper layers of the OSI stack:

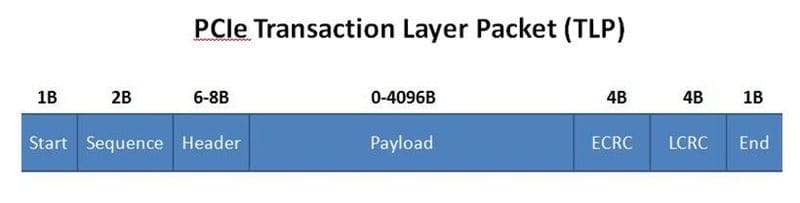

For PCIe, transaction layer packets (TLPs, or data packets) are protected in the Data Link Layer by the link CRC (LCRC). This 32-bit CRC protects the large, variable-sized payload (not including the framing start/end bytes). The end-to-end CRC (ECRC), if used, provides a further level of checking across multiple link hops, up at the PCIe Transaction layer (which, mapped to the OSI model, is a combination of the functions within the Network and Transport layers).
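As a rough illustration of how a 32-bit CRC catches a corrupted packet, here is a minimal sketch. It uses Python's `zlib.crc32` as a stand-in; the actual LCRC calculation, bit ordering, and coverage are defined by the PCIe specification and are not reproduced here. The helper names are hypothetical.

```python
import zlib


def append_crc(payload: bytes) -> bytes:
    """Append a 32-bit CRC to the payload (stand-in for LCRC generation)."""
    return payload + zlib.crc32(payload).to_bytes(4, "big")


def crc_ok(frame: bytes) -> bool:
    """Recompute the CRC over the payload and compare it with the received one."""
    payload, received = frame[:-4], int.from_bytes(frame[-4:], "big")
    return zlib.crc32(payload) == received


def flip_bit(frame: bytes, bit_index: int) -> bytes:
    """Return a copy of the frame with one bit inverted, as a bit error would."""
    corrupted = bytearray(frame)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


frame = append_crc(b"example TLP payload")
print(crc_ok(frame))                # True: the frame arrived intact
print(crc_ok(flip_bit(frame, 10)))  # False: a single flipped bit is detected
```

A single-bit error is always caught by a CRC of this kind. Some multi-bit error patterns can still slip through, which is why the checks in the layers above still matter.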

When an error of any sort is detected, the upper layers of the stack are put on hold until the error is rectified, if this is possible. A NAK data link layer packet (DLLP) is sent to the transmitter, and the TLP replay buffer kicks in until successful transmission is accomplished.
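The NAK-and-replay behavior can be sketched as a toy model. This is a deliberately simplified, one-packet-at-a-time version: real PCIe pipelines many TLPs, uses ACK/NAK DLLPs with timers and replay counters, and uses its own LCRC rather than `zlib.crc32`. All names here are hypothetical, and `corrupt_attempts` is just a test hook for injecting bit errors.

```python
import zlib


def make_frame(seq: int, payload: bytes) -> bytes:
    """Build sequence number + payload + 32-bit CRC (stand-in for LCRC)."""
    body = seq.to_bytes(2, "big") + payload
    return body + zlib.crc32(body).to_bytes(4, "big")


def check_frame(frame: bytes):
    """Return (seq, payload) if the CRC matches, otherwise None."""
    body, received = frame[:-4], int.from_bytes(frame[-4:], "big")
    if zlib.crc32(body) != received:
        return None
    return int.from_bytes(body[:2], "big"), body[2:]


def deliver(payloads, corrupt_attempts):
    """Send payloads in order, replaying any frame that the receiver NAKs.

    Transmission attempts whose index is in corrupt_attempts get one bit
    flipped. Returns (delivered payloads, total transmission attempts).
    """
    replay_buffer = [make_frame(seq, p) for seq, p in enumerate(payloads)]
    delivered, attempts, next_seq = [], 0, 0
    while next_seq < len(replay_buffer):
        frame = replay_buffer[next_seq]
        if attempts in corrupt_attempts:
            damaged = bytearray(frame)
            damaged[2] ^= 0x01  # inject a single bit error on the wire
            frame = bytes(damaged)
        attempts += 1
        result = check_frame(frame)
        if result is None:
            continue  # receiver NAKs; the same frame is replayed
        delivered.append(result[1])
        next_seq += 1  # receiver ACKs; move on to the next frame
    return delivered, attempts


delivered, attempts = deliver([b"a", b"b", b"c"], corrupt_attempts={1, 2})
print(delivered, attempts)
```

Every packet still arrives intact and in order, but three packets cost five transmissions. That extra traffic is where the lost throughput comes from.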

Implementation schemes differ among silicon vendors, but past certain error thresholds, a physical layer re-initialization will attempt to restore the link. Of course, user traffic is suspended while the link is being recovered by the Link Training and Status State Machine (LTSSM).

When errors escape the lower layers (note from an earlier blog that CRCs are not perfect), they may yet be picked up at the Transaction layer, via erroneous flow control updates. Again, the link may need to enter recovery, depending on the severity and frequency of errors.

So, depending on the frequency and nature of bit errors, the system may display any of the following symptoms:

- Lowered throughput/performance

- Errors are logged

- Drivers do not load properly

- Speed degrades

- Link width degrades

- Intermittent dropouts

- Surprise Link Down (“SLD”)

- Device Not Found (“DNF”)

- System crash/hang (“blue screen”)

The higher the bit error rate, the more severe the symptoms in a design. A drop in system performance may be just an annoyance or even invisible to an end user; link width degradations may cause a level of customer dissatisfaction; but SLDs, DNFs and crashes are partial or total system outages, which can have a major negative impact.

So, equalization plays a key role in improving the margin of the link and managing its bit error rate. In silicon receivers, this is accomplished by fixed programmable equalization (i.e. Continuous Time Linear Equalization, or CTLE) and adaptive equalization (i.e. Decision Feedback Equalizer, or DFE). Adaptive equalization, or “tuning”, can be done when the rate (i.e. 2.5GT/s, 5GT/s or 8GT/s) is first entered, on every electrical idle exit, on every rate change, or continuously. Of course, running adaptive equalization continuously can increase transceiver power consumption significantly, by up to 2X. But adaptive equalization improves system margins in the presence of high-volume silicon manufacturing process variance, voltage and temperature corners, humidity, silicon aging, and a myriad of potential board and chip defects and variances.
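To show why a closed eye produces bit errors and how decision feedback reopens it, here is a toy model. The channel taps are invented for illustration and do not come from any real PCIe channel. The DFE taps are also assumed to match the channel exactly, whereas a real receiver has to adapt them; that adaptation is the “tuning” described above.

```python
# Hypothetical channel: each symbol leaks into the next two symbols.
CHANNEL_TAPS = (0.6, 0.5)


def channel(symbols):
    """Add post-cursor interference from the previous symbols to each sample."""
    received = []
    for n, symbol in enumerate(symbols):
        sample = float(symbol)
        for k, tap in enumerate(CHANNEL_TAPS, start=1):
            if n - k >= 0:
                sample += tap * symbols[n - k]
        received.append(sample)
    return received


def slice_only(received):
    """Decide each bit from the sign of the raw sample (no equalization)."""
    return [1 if sample >= 0 else -1 for sample in received]


def dfe(received, taps):
    """Subtract the interference predicted from earlier decisions, then slice."""
    decisions = []
    for n, sample in enumerate(received):
        for k, tap in enumerate(taps, start=1):
            if n - k >= 0:
                sample -= tap * decisions[n - k]
        decisions.append(1 if sample >= 0 else -1)
    return decisions


def count_errors(sent, decided):
    """Count positions where the decided symbol differs from the sent one."""
    return sum(1 for s, d in zip(sent, decided) if s != d)


sent = [-1, -1, 1, 1, 1, -1, 1, -1, -1, 1, -1, 1, 1, -1, -1, 1]
received = channel(sent)
print("errors without DFE:", count_errors(sent, slice_only(received)))
print("errors with DFE:   ", count_errors(sent, dfe(received, CHANNEL_TAPS)))
```

In this noise-free sketch, the raw samples are misread whenever a symbol follows two symbols of the opposite polarity, because the combined interference (1.1) exceeds the symbol amplitude (1.0). With feedback from earlier decisions, every symbol is recovered. With real noise, and with taps that drift as voltage and temperature change, the feedback has to be retuned to hold that margin.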

For more theory behind this, please see our white paper, Detection and Diagnosis of Printed Circuit Board Defects & Variances.